Hello guys,

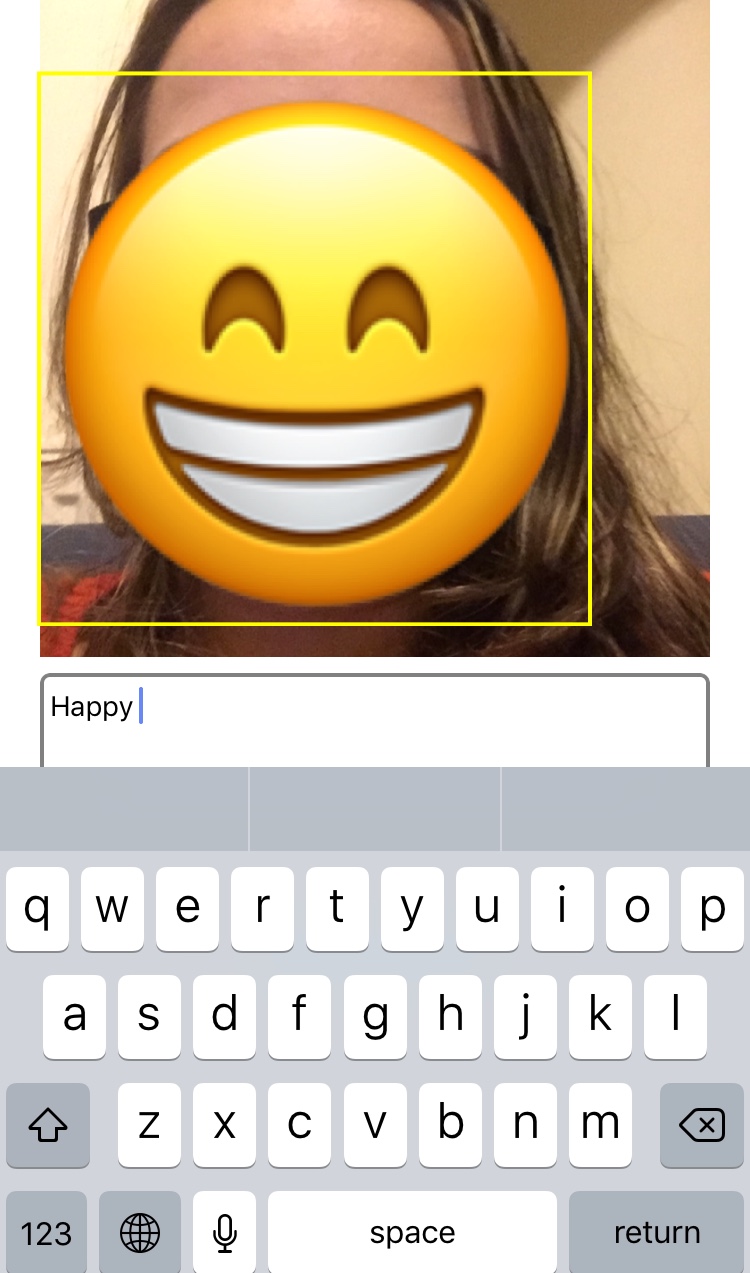

Three weeks ago I went to #TechKnowday here in London, and one of the workshops I joined was Machine Learning in iOS Apps. By the end, we had a face recognition app that showed the person's emotion: if the picture showed someone smiling, it displayed the smiley emoticon, and so on.

You can find the slides and follow the explanations here:

https://github.com/costescv/MachineLearning/blob/master/MachineLearning.pdf

Next, clone the project repository from https://github.com/costescv/machinelearning and download the Sentiment Polarity model here:

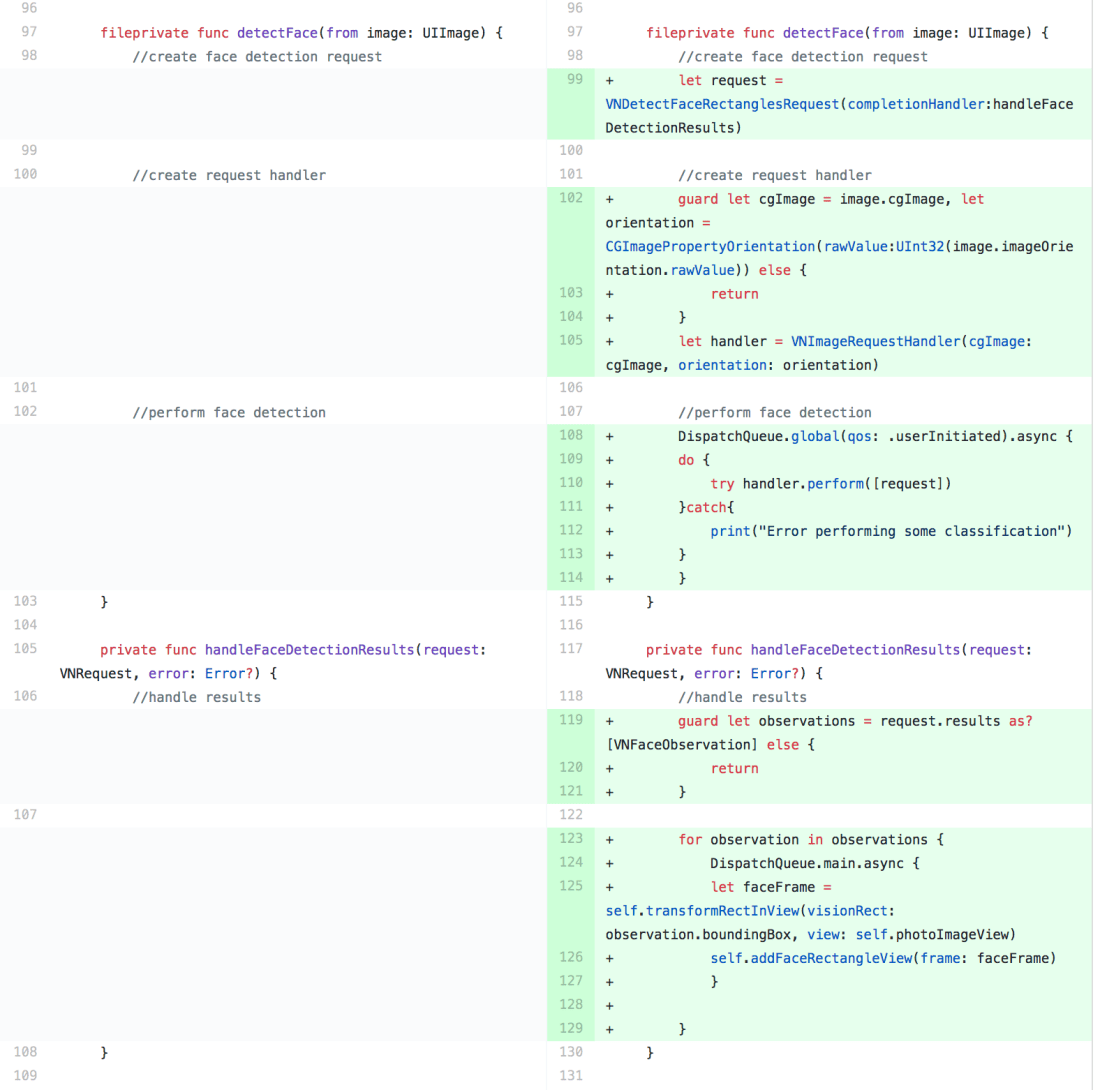

Step 1:

In ViewController.swift, you need to create the face detection request, the request handler, and the face detection action. You should end up with something like this:

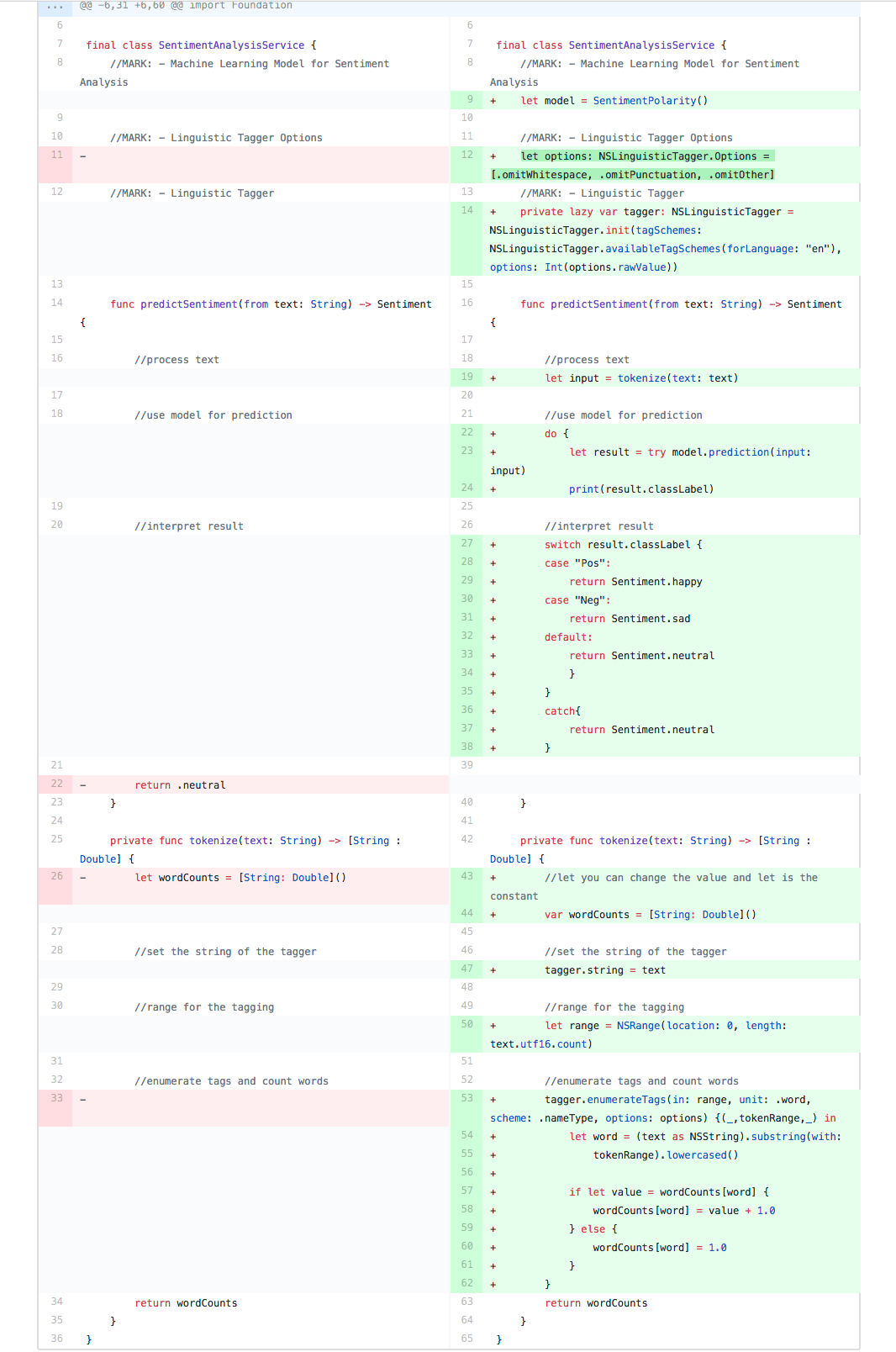
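If the screenshot is hard to read, here is a minimal sketch of the same three pieces using Apple's Vision framework. It is an illustration under assumptions, not the workshop code: the class name `FaceDetector` and the `onFacesDetected` callback are hypothetical, and the logic sits in a plain class instead of `ViewController` so it compiles without UIKit. The `#if canImport(Vision)` guard only keeps the file buildable on platforms that lack Vision.

```swift
import Foundation

#if canImport(Vision)
import Vision
import CoreGraphics

/// Hypothetical helper holding the three pieces from Step 1.
final class FaceDetector {
    /// Hypothetical callback the UI can use to react to detected faces.
    var onFacesDetected: (([VNFaceObservation]) -> Void)?

    // 1. The face detection request. Vision calls the completion handler
    //    once the request has been performed on an image.
    lazy var faceDetectionRequest: VNDetectFaceRectanglesRequest = {
        VNDetectFaceRectanglesRequest(completionHandler: { [weak self] request, error in
            self?.handleFaces(request: request, error: error)
        })
    }()

    // 2 + 3. The face detection action: create a request handler for the
    //        image and perform the request with it.
    func detectFaces(in image: CGImage) {
        let handler = VNImageRequestHandler(cgImage: image, options: [:])
        do {
            try handler.perform([faceDetectionRequest])
        } catch {
            print("Face detection failed: \(error)")
        }
    }

    // Reads the face observations produced by the request.
    private func handleFaces(request: VNRequest, error: Error?) {
        guard error == nil,
              let faces = request.results as? [VNFaceObservation] else { return }
        onFacesDetected?(faces)
    }
}
#endif
```

In a view controller you would keep one `FaceDetector`, set `onFacesDetected` to update the UI, and call `detectFaces(in:)` from the face detection action (for example, an `@IBAction` wired to a button). `VNImageRequestHandler.perform(_:)` is synchronous, so you may want to run it off the main thread and dispatch back to the main queue before touching the UI.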

Step 2:

In SentimentAnalysisService.swift, you need to create the model using SentimentPolarity, pass the linguistic tagger options, and create the input that receives the text and interprets its sentiment. You can add, remove, or change the sentiments in the Sentiment.swift class, but don't forget to update them in SentimentAnalysisService.swift as well.
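The input side of this service can be sketched as follows. The sketch assumes, as with the commonly distributed SentimentPolarity.mlmodel, that the model takes a dictionary of word counts (`[String: Double]`) as its input; check the model's input description in Xcode to confirm. The function name `wordCounts(in:)` is hypothetical, and its simple split on non-letter characters is a stand-in for the NSLinguisticTagger tokenization the workshop uses (so "don't" becomes "don" and "t", for example).

```swift
import Foundation

/// Builds a bag-of-words dictionary: each lowercased word maps to the number
/// of times it occurs in `text`. Hypothetical helper; it splits on any
/// non-letter character as a simple stand-in for NSLinguisticTagger.
func wordCounts(in text: String) -> [String: Double] {
    var counts: [String: Double] = [:]
    // Lowercase first so "Love" and "love" count as the same word.
    let words = text.lowercased().split(whereSeparator: { !$0.isLetter })
    for word in words {
        counts[String(word), default: 0] += 1
    }
    return counts
}
```

For example, `wordCounts(in: "I love it, I really love it!")` returns `["i": 2, "love": 2, "it": 2, "really": 1]`. On an Apple platform you would pass a dictionary like this to the prediction method Xcode generates for the model and read the predicted class label.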

After you build and run the app, type the name of a sentiment followed by a space, and the app should show the corresponding emoticon.
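One way to implement the "space at the end" behaviour is to run the classifier only when the typed text ends with a space, then map the model's class label to a sentiment. Everything here is an assumption for illustration: the `Sentiment` cases and emoji, the "Pos"/"Neg" label names, and the `sentiment(forTypedText:classify:)` function are hypothetical. The real list of sentiments lives in Sentiment.swift, and `classify` stands in for the call to the SentimentPolarity model.

```swift
import Foundation

/// Hypothetical sentiments; Sentiment.swift in the repository defines its own list.
enum Sentiment {
    case positive
    case negative
    case neutral

    /// Emoji shown in the UI for each sentiment.
    var emoji: String {
        switch self {
        case .positive: return "😊"
        case .negative: return "😔"
        case .neutral: return "😐"
        }
    }

    /// Maps a model class label to a sentiment. "Pos" and "Neg" are
    /// assumed label names; anything else (or nil) is treated as neutral.
    init(classLabel: String?) {
        switch classLabel {
        case "Pos"?: self = .positive
        case "Neg"?: self = .negative
        default: self = .neutral
        }
    }
}

/// Runs `classify` only once the user has finished a word, i.e. when the
/// text ends with a space. Returns nil while a word is still being typed.
func sentiment(forTypedText text: String,
               classify: (String) -> String?) -> Sentiment? {
    guard text.hasSuffix(" ") else { return nil }
    let trimmed = text.trimmingCharacters(in: .whitespaces)
    guard !trimmed.isEmpty else { return nil }
    return Sentiment(classLabel: classify(trimmed))
}
```

In the app you would call this from the text field's editing-changed handler and show `emoji` for any non-nil result. Passing the classifier in as a closure keeps the trigger logic testable without loading the Core ML model.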

Thanks, Vasilica, for this workshop!