When you work in an Agile team, the feedback loop should be as short as possible. If the feedback doesn't arrive at the right time, bugs become costly to fix later, because by then the feature already has a large amount of code.

What is this feedback loop?

If you have implemented continuous integration and automated tests run after each new commit on your dev environment, you need to report the results of those tests as soon as possible. This is the feedback loop, and you need to know the right time to report an issue to the dev team.

If your automation takes too long to run the tests after a new commit, this is a sign that you need to improve your smoke tests. Maybe the scope is too large, or maybe the automation is slow for other reasons: sleeps, a setup that doesn't scale, etc.

The feedback loop influences how your agile process works and whether you are saving or wasting time during development. Tight feedback loops improve the performance of the team in general, give confidence, save time and avoid costly bug fixes.

Feedback loops are not only about continuous integration; they are about pair programming and unit tests as well. This time, though, we will focus on continuous integration tests.

When you are implementing a new scenario in your automated tests, you want to know ASAP if what you implemented breaks that scenario or any other one. The same applies when you are developing something related to a feature and want to know if the new implementation breaks the tests. With quick feedback the bug is easier to fix, because the change is still fresh in your mind. You don't want to wait 30 minutes to find out there is a bug because you changed the name of a variable…

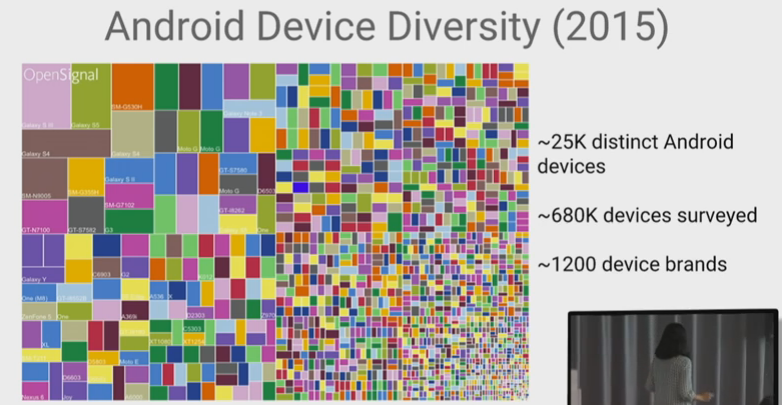

In my personal opinion, if you can't run tests in parallel to check multiple browsers or mobile devices at the same time, it is better to focus on the most used browser or device, since that is the first priority in every case.

Use case: 90% of users are on Chrome on desktop, 5% are on Firefox mobile and the other 5% are on Safari mobile. What is the best strategy?

After commit:

- Run the smoke tests on all the browsers and wait 15 minutes for feedback?

- Run the regression tests on all the browsers and wait 40 minutes for feedback?

- Run the smoke tests only on the most used browser, get feedback in 5 minutes, and leave the run on all the browsers to an hourly job?

- Run the regression tests only on the most used browser, get feedback in 10 minutes, and leave the run on all the browsers to an hourly job?

There is no rule to follow, but since in this case you don't have parallel tests, I would go for the third option. You focus on the most used browser after each commit and leave the other browsers to a dedicated job that runs every hour. Why not the fourth option? Because you need to keep the business value in mind: the smoke tests give you feedback in 5 minutes instead of 10.
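The comparison above can be written down as a small sketch. The `Option` class and the helper names are hypothetical, and picking the option with the shortest feedback time is only one simplified reading of "business value"; the numbers are the ones from the use case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    name: str
    suite: str             # "smoke" or "regression"
    browsers: str          # "all" or "primary"
    feedback_minutes: int  # time to get feedback after a commit


# The four options from the use case above.
OPTIONS = [
    Option("1", "smoke", "all", 15),
    Option("2", "regression", "all", 40),
    Option("3", "smoke", "primary", 5),
    Option("4", "regression", "primary", 10),
]

# Share of users per browser, taken from the use case.
USAGE = {"Chrome desktop": 0.90, "Firefox mobile": 0.05, "Safari mobile": 0.05}


def primary_browser(usage):
    """Return the browser with the largest share of users."""
    return max(usage, key=usage.get)


def fastest_option(options):
    """Assumption: the best option is the one with the shortest feedback time."""
    return min(options, key=lambda option: option.feedback_minutes)


choice = fastest_option(OPTIONS)
# Browsers tested right after each commit.
post_commit = [primary_browser(USAGE)] if choice.browsers == "primary" else list(USAGE)
# Browsers left to the dedicated hourly job.
hourly = [browser for browser in USAGE if browser not in post_commit]

print(f"Option {choice.name}: {choice.suite} tests on {post_commit} after each commit")
print(f"Hourly job: {hourly}")
```

With different usage numbers or different run times the same comparison could point to another option, which is why there is no fixed rule.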

Of course we need to deliver the feature on all the browsers in use, but when time is tight (which is very often) and you need to deliver as fast as you can, you go for the option with the most business value and cover the other browsers afterwards. Don't forget that when you automate, you are not only helping development, you are also helping the end users.

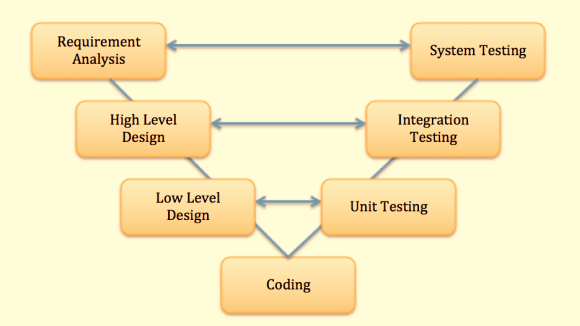

If you are wondering how long each type of test should take to give feedback, you can build your own process based on this graph:

How long should the team keep the test reports?

It depends on how many times you run the tests during the day. There is no rule for that, so you need to find the best option for you and your team. In my team we keep the test reports for the last 15 runs on Jenkins, and after that we discard the report and the logs. In most cases, I've found that if something goes back more than 3 major versions, looking for more resolution is a waste of time.
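As a rough illustration of this kind of retention rule, here is a minimal sketch that splits run numbers into the ones to keep and the ones to discard. The function name `split_reports` is hypothetical and this is not how Jenkins itself does it; the limit of 15 is just the value my team uses.

```python
def split_reports(run_numbers, keep=15):
    """Split run numbers into (kept, discarded), keeping the newest `keep` runs."""
    ordered = sorted(run_numbers, reverse=True)  # newest run first
    return ordered[:keep], ordered[keep:]


# Example: 20 runs so far, numbered 1 to 20.
kept, discarded = split_reports(range(1, 21))
print("keep reports for runs:", kept)
print("discard reports for runs:", discarded)
```

In this example the reports for runs 6 to 20 stay and the reports for runs 1 to 5 are discarded.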

If regressions are reported as soon as they're observed, the report should include the first known failing build and the last known good build. Ideally these are sequential, but this isn't necessarily the case. Some people like to archive the old reports outside Jenkins. I haven't felt the need for this so far, but it is up to you whether to keep these reports outside Jenkins.
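A minimal sketch of how the last known good build and the first known failing build could be found from a build history. The function name and the history format are assumptions made for illustration: each entry is `(build_number, passed)`, ordered from oldest to newest, with `None` for a build where the tests did not run.

```python
def regression_range(history):
    """Return (last_known_good, first_known_failing) for the latest failure streak.

    `history` is a list of (build_number, passed) tuples, oldest first.
    `passed` is True, False, or None when the tests did not run for that build.
    """
    first_failing = None
    for number, passed in reversed(history):  # walk back from the newest build
        if passed is None:
            continue  # no test result for this build, so it tells us nothing
        if passed:
            return number, first_failing
        first_failing = number  # earliest failure seen so far in this streak
    return None, first_failing  # no passing build in the history


history = [(101, True), (102, True), (103, None), (104, False), (105, False)]
last_good, first_failing = regression_range(history)
print(f"last known good: {last_good}, first known failing: {first_failing}")
```

Here build 103 has no result, so the two builds in the report (102 and 104) are not sequential, and the regression could have been introduced in either 103 or 104.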

Resources:

http://istqbexamcertification.com/why-is-early-and-frequent-feedback-in-agile-methodology-important/