Renata Andrade is a software quality champion. She helps teams build the best strategy to practice quality in the easiest, fastest and most reliable way possible, adding more value to the business.

Driven by excellence, innovation and creativity, Renata loves making a difference, learning, sharing knowledge, and inspiring people. She likes working in a high-tech environment with difficult challenges to solve. Renata also loves attending conferences, reading books, writing blog posts, and giving talks about diverse topics in this area.

Be sure to follow Renata on LinkedIn.

TL;DR

Renata Andrade chose to immigrate to the USA after falling in love with San Francisco during a visit. She saved money, studied English in New Zealand, and eventually got a job at a consulting company in San Francisco. In 2018, she applied for and obtained an H1B visa sponsored by the company. She highlights the respectful atmosphere and the vibrant tech scene in Silicon Valley as reasons for choosing to live there. She also discusses cultural differences in work relations and the fast-paced nature of companies in Silicon Valley. Renata shares advice for those looking to relocate and emphasises the importance of understanding the cultural differences beforehand. She notes that the perception of quality assurance and the importance placed on it in the USA can vary, but that Brazilian QAs are generally more prepared. She mentions tools and practices like Git, code reviews, and DevOps that have improved her QA processes. She also highlights the value of a solid software engineering foundation and the lessons learned in Brazil. She sees the work-life balance in the USA as better, with flexible working hours, summer Fridays, and unlimited paid time off being common. Professional expectations vary but are generally similar.

What were your reasons for choosing to immigrate to the USA? How did you prepare for finding a job and planning your move?

I visited San Francisco in 2014 and fell in love with the city. People here are really respectful to each other and I could sense that as soon as I got here. Personally, I like exploring new places and getting to experience new cultures. I didn’t speak any English at that time and I had no idea how the immigration process worked. It was the first time I wanted to live somewhere different from my hometown (I’m from Belo Horizonte, Minas Gerais and I do love Brazil). So I started to save money, and in 2015 I went to New Zealand for 6 months to study English. When I got back to Brazil, I got a job at a consulting company from San Francisco, California.

We’d speak English among ourselves and work for American clients. The company didn’t have any American visa programs back then, but in 2018 it started one. I applied for it and got selected in the H1B visa lottery, sponsored by the company (this is one way to immigrate to the US). They had a whole relocation program and it was pretty smooth to move with their support. I arrived already working for one of their clients, and they provided accommodation for 3 months. Since there were other Brazilians at the company, we shared the whole experience.

Also, I had come to the US, to San Francisco itself, in 2016 and 2017 through this company, and I got really familiar with everything. It has been my choice to live in San Francisco specifically since I first visited in 2014; I wouldn’t choose anywhere else. I really admire not only the respect factor that I mentioned at the beginning, but also how cool it is to be in Silicon Valley. Everything here is super tech: we see people riding their Onewheels or electric scooters, having conversations on their AirPods everywhere (even on public transportation), and wearing their companies’ t-shirts (like Mozilla, Instagram, Google, Netflix) and backpacks. Not to mention that I live within 10 minutes of so many tech companies, such as Meta, Google, IBM, Visa, PlayStation, Fanatics, Rakuten, and Oracle. I love it! A few weeks ago, I saw a driverless car!!! Yes. A car, driving without a driver!!! That blew my mind and I couldn’t be in a better place for myself.

If I could offer some advice, I’d recommend that people looking to relocate visit the place beforehand, if possible, and get a sense of what it is like. Of course each experience is unique, and there are many factors that can impact your relocation, such as climate, transportation, food, leisure, culture, etc., so it’s good to understand a bit of how these things work so you aren’t caught by surprise when you move. After moving to another country, you sometimes feel like you belong neither to the new place nor to the old one. It’s not an easy process, but it’s equally amazing.

What are some of the cultural differences you’ve encountered in the new workplace?

We usually have the impression that Americans are workaholics. I don’t see that. They don’t have work regulations like Brazilians do, such as a maximum of 40 hours per week, 30 days of vacation, or increased rates for overtime after 10pm or on weekends/holidays. That doesn’t mean they have no rules. In fact, I notice they are way more straightforward.

Their work relations are real work relations. You are hired, you have your benefits (or not), you can request vacation at any time (depending on the company’s policy), and you can quit at any moment: no notice required, no bureaucracy, no questions asked. Here in Silicon Valley, it is really easy to start a company, and there are a lot of financial incentives, so I feel that people here think really fast. Their pace and creativity are way different from Brazilians’ because starting things is easier and it’s simply how they behave, so people are shaped by this “culture” of entrepreneurship. At the same time, they just want to do their jobs. When they are done, they are done. And they move on.

So, to recap, the work relations are simple and straightforward. The main difference for me is the pace at which things get done or change: everything is super fast. And I’d say, because there are a lot of immigrants, you do experience a lot of differences depending on the team/company you work with. These differences could be in the way you communicate, the team’s interactions, or even the quality of the work.

Outside of work life, I can say that the buying power here is very different from Brazil. You can get appliances, clothes, phones, and cars much more easily than in Brazil. At some point you realize you have way too many things, and not a lot of space to store them… hahaha 😂

Are there any specific challenges you faced when adapting to the QA practices and standards in the USA? How did you overcome them?

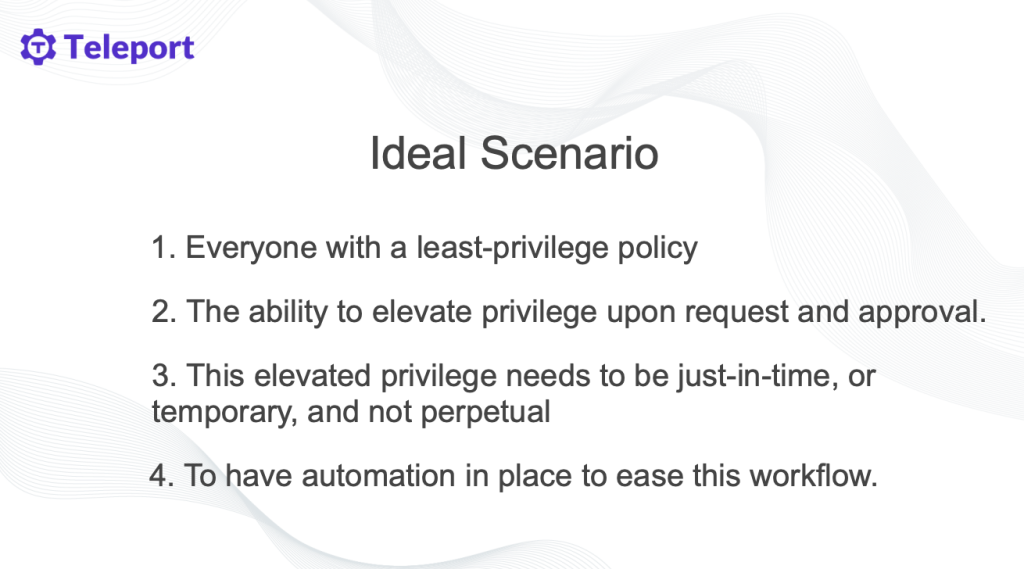

I don’t believe there were challenges related to the QA practices themselves, but I did face some challenges in explaining the importance of QA-related practices to leaders. For instance, when I say that we need to improve the testing framework because it’s taking too long to run or to develop a new test, leaders don’t always see why that matters.

I need to be extra product-oriented or wait for issues to happen to make them understand the importance of it. Most of the time, I use numbers to back up my arguments. For example, when I needed to hire more QAs for my team, I gathered the time I was spending on each activity, the time it would take to automate them, and the impact of not doing them. I usually offer a few options, like a best-case scenario, an “ok” scenario, and lastly a “not ideal but acceptable” scenario (more often, just 2 options so they don’t spend too much time making a decision).

Another technique is benchmarking: bringing to the table how company A, B or C does something is also helpful, although this data is sometimes not easy to get. I have to be honest here that I haven’t been super successful with these approaches. I had some episodes where issues happened in production, and then they finally understood what I was trying to say. My guess is that because things here happen so fast and companies tend to follow each other’s examples, companies hire QAs without really understanding why they need them (sometimes they think it’s just to manually test things). But overall the QA practices are pretty similar.

Have you noticed any variations in the perception or importance placed on quality assurance compared to Brazil? If so, in what ways?

I have mixed opinions about this topic, because the US is really big and it has a lot of companies. My first impression when I started to work for American companies from Brazil was that most of the tools and technologies were the top trending ones. We could also assume that, since most of the QAs who write and speak about software quality are Americans.

But when you really start working here, you realize that it’s very diverse. I’ve worked with really high-performance teams and really smart people, and with below-average teams too. Talking about quality practices specifically, I see that they are way behind development practices in terms of people’s knowledge. I feel that Brazilian QAs are more prepared in general.

Are there any new or unique methodologies or tools that you’ve come across that have improved your QA processes?

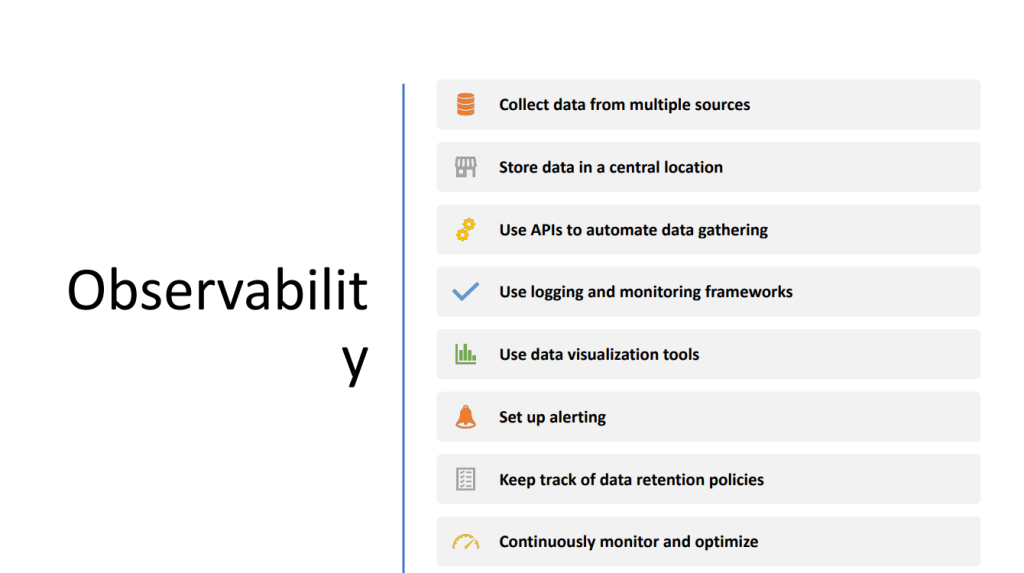

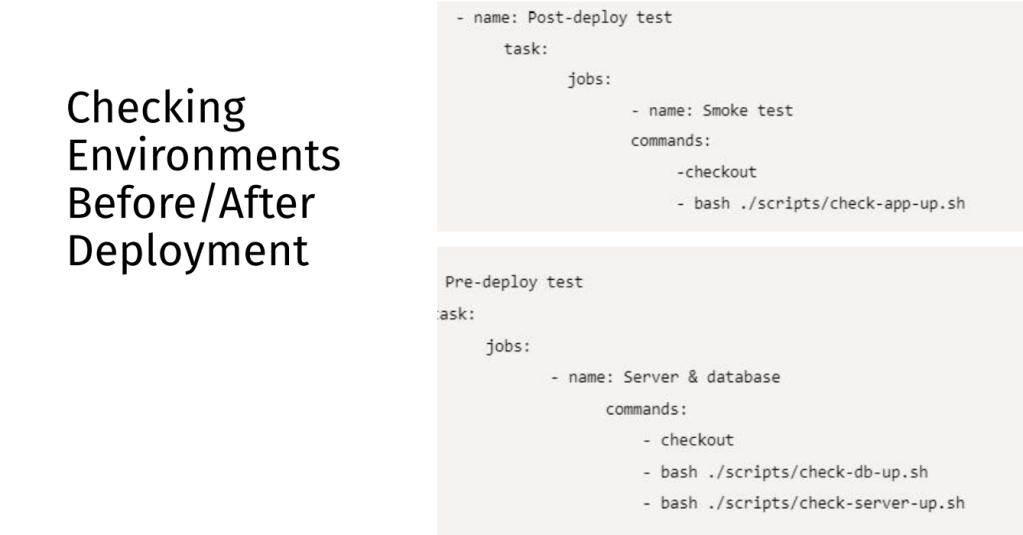

Yes, I got really good at Git practices, code reviews, and DevOps. I think the opportunity to work closely with dev teams and take on responsibilities that usually don’t belong to QAs made me grow a lot. Living in Silicon Valley and being close to tech companies also helped, because I see ads everywhere, which makes me curious about them and pushes me to at least understand what they do. Going to meetups is another factor that exposes me to a lot of tools and practices. We can definitely find all of that in Brazil too, but I’d say the pace here sped up the process a lot.

A great example here is Playwright. It was suggested by a developer when we were deciding which tool to use and it ended up becoming the tool we adopted and my very favorite tool. I have worked with many tools such as Selenium, WebdriverIO, TestCafe (which is also really good – it has the best locator strategy in my opinion), but the speed and simplicity of Playwright are really impressive and make things sooo much easier for QAs and Devs!

I liked it so much that I now have some Playwright courses in English and Portuguese!

Are there any specific lessons or skills you learned in Brazil that you find particularly valuable in the USA?

Brazilians are really hard workers. It’s pretty honorable ❤️. Also, the universities and training are really good in Brazil. So I think building a solid software engineering foundation was pretty valuable, because it helps make sure you are employable. The job market can get competitive, so it’s great to have that foundation before coming to the US. Not to mention that your problem-solving ability scales a lot when you have a good foundation.

It’s also important to share a lesson I “didn’t” learn: non-Americans from certain industries, including IT, can apply for their own green card (which allows you to stay in the US permanently) independently. This green card category is called EB2-NIW. Without a green card, you need a visa that is linked to a company or school, and it expires around 6 years after you arrive in the US (so you need to return to your country after it expires). The temporary visa has a lot of limitations, so the green card is a great option in this case. Had I known that before, my life would have been so much easier.

Have you noticed any differences in the work-life balance or professional expectations?

It’s funny to say, but Americans work less, ahahah, compared to Brazil. There is no mandatory “lunch time”, so you can work 8 hours straight and still have lunch and breaks. You are naturally pushed to learn more and deliver during your productive time, but not necessarily to work more. Another funny thing is that happy hours in the US are usually from 2pm to 6pm.

So, if you are going to a happy hour, you leave work earlier for that. Since the weather is also pretty different from Brazil, some companies have summer Fridays, when they allow employees to leave earlier during the summer. Another great thing is unlimited paid time off. A lot of companies offer it, and it means that you can go on vacation for as many days as you want per year and still get paid.

Of course your manager has to approve it, and if you don’t “deliver your work” you get fired, but if you are doing a good job, it’s pretty useful. There is no extra ⅓ of your salary for vacations, but you get paid as if you were working normally. I had almost 2 months of vacation at my last job 🙌.

Professional expectations vary a lot, depending on the company, team, etc. In general, I’d say they’re pretty similar.

And that’s a wrap! Thanks, Renata! If you have any questions, feel free to contact her 🙂